More autonomy in goods receiving: To meet the new challenges facing the automotive industry, manufacturers need to invest more in modernizing their production processes. One large automotive manufacturer in the Czech Republic has already begun investing in logistics, making its receiving area fit for the future with SICK's Visionary-T 3D camera combined with smart algorithms from Neadvance. The two companies joined forces to automate one more step in goods receipt and thus increase the customer's process efficiency.

Faster depalletizing: Robots see more with 3D snapshot technology

Goods receipt as a part of the overall system

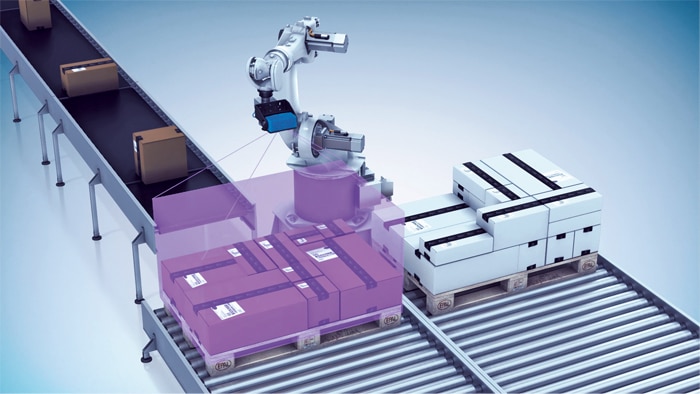

The central element of the solution is the use of articulated robots combined with the Visionary-T 3D camera. The robot picks the delivered goods from the pallets and transfers them to a conveyor belt that connects the receiving area to the intralogistics warehouse. Screws, mirrors, instrument panel components: before the automated goods receipt system was introduced, these components were removed from the pallet by hand after being unloaded from the truck, and then sent on their way to vehicle production. Today, the OEM's facilities have an operational logistics solution that holistically connects the receiving area, the high-bay warehouse, and production.

To achieve this, the depalletizing robot had to be automated to the point where it could independently and consistently recognize the position of individual parcels and the various packaging units on a delivered pallet, and thus grasp them reliably. SICK solved this demanding application with a camera solution: thanks to 3D snapshot technology, the Visionary-T CX streaming camera supplies the robot controller with three-dimensional images in the form of 3D point clouds.

Time-of-flight determines shape and distance

To generate three-dimensional images, the device uses 3D Time-of-Flight technology. A built-in light source emits light, and the camera measures the tiny time differences until this light is reflected from an object's surface back to the camera. From these time differences, the distance to the reflecting surface can be calculated and then converted into a three-dimensional representation with the aid of special algorithms. Thanks to the camera's high frame rate, this can be done up to 50 times per second. Its powerful integrated active illumination even allows the camera to operate in complete darkness and to detect objects with very low reflectivity.
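As a rough illustration of the principle just described: light travels to the surface and back, so the distance is half the round-trip path, d = c·Δt/2. The sketch below also turns a small depth image into a 3D point cloud using a simple pinhole camera model. This is a simplified, hypothetical sketch and not SICK's on-device processing: it assumes each pixel reports the round-trip time directly, treats the resulting distance as depth along the optical axis, and uses made-up intrinsics `fx`, `fy`, `cx`, `cy`. The function names are illustrative only.

```python
# Simplified time-of-flight sketch (hypothetical, not the Visionary-T CX pipeline).
# Assumptions: each pixel yields a round-trip time directly, the distance is
# treated as depth along the optical axis (Z), and a pinhole camera model with
# made-up intrinsics (fx, fy, cx, cy) is used for back-projection.

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


def distance_from_round_trip(round_trip_s: float) -> float:
    """Light travels to the surface and back, so halve the total path length."""
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def depth_image_to_points(depth, fx, fy, cx, cy):
    """Back-project a depth image (list of rows, in metres) into (x, y, z) points."""
    points = []
    for v, row in enumerate(depth):
        for u, z in enumerate(row):
            if z <= 0.0:  # skip pixels without a valid measurement
                continue
            x = (u - cx) * z / fx
            y = (v - cy) * z / fy
            points.append((x, y, z))
    return points


if __name__ == "__main__":
    # A surface 1.5 m away returns the light after roughly 10 nanoseconds.
    t = 2 * 1.5 / SPEED_OF_LIGHT
    print(f"{t * 1e9:.2f} ns -> {distance_from_round_trip(t):.3f} m")
    # Tiny 2x2 depth image with one invalid pixel (0.0).
    cloud = depth_image_to_points([[1.5, 1.5], [0.0, 1.4]], fx=2.0, fy=2.0, cx=0.5, cy=0.5)
    print(cloud)
```

The same arithmetic shows why the time differences are called tiny: resolving a 1 cm change in distance corresponds to a round-trip time difference of only about 67 picoseconds.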

Within the goods receipt system, the Visionary-T CX is attached to the robot's articulated joint and moves with it continuously, like an alert eye. As a result, the streaming camera is directly exposed to the robot's dynamic accelerations, reversing movements, and vibrations. For Neadvance, 3D snapshot technology was therefore particularly important in this application, because every depth and intensity pixel of an image is captured simultaneously. And because the Visionary-T has no moving components for capturing depth information, the camera is also more robust against external vibrations and impacts. This is especially important when, as in a typical receiving area, the delivered goods have to be picked quickly.

In day-to-day operation, the Visionary-T CX delivers the 3D point clouds mentioned above. Algorithms developed by Neadvance, based on 3D shape analysis, use these point clouds to determine the exact position of crates and cardboard boxes. The robot then reliably moves its special gripper to the corresponding coordinates, picks the item, and places it on the conveyor belt. To grasp the next crate, the process starts again from the beginning: the robot moves over the pallet, the Visionary-T CX captures the required images and delivers 3D data, and a computer processes these data to determine the next target coordinates.
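The article does not disclose how Neadvance's shape-analysis algorithms work. Purely as a simplified, hypothetical stand-in, the sketch below derives a next pick target from a point cloud: it keeps the points near the top of the stack, groups them into connected blobs on a coarse 2D grid, and returns the centroid of the highest blob. It assumes the point cloud has already been transformed into the robot's base frame with z pointing up; the function names and the `band` and `cell` parameters are illustrative. A real system would also have to separate boxes that touch at equal height, which this grid grouping would merge into one blob.

```python
# Hypothetical pick-target heuristic, NOT Neadvance's algorithm.
# Assumption: points are (x, y, z) in metres in the robot base frame, z pointing up.
from collections import deque
from statistics import mean


def top_layer(points, band=0.02):
    """Keep points within `band` metres of the highest point."""
    z_max = max(p[2] for p in points)
    return [p for p in points if p[2] >= z_max - band]


def cluster_xy(points, cell=0.02):
    """Group points into connected blobs on a grid of `cell`-sized squares (8-neighbourhood)."""
    cells = {}
    for p in points:
        key = (int(p[0] // cell), int(p[1] // cell))
        cells.setdefault(key, []).append(p)
    seen = set()
    clusters = []
    for start in cells:
        if start in seen:
            continue
        seen.add(start)
        queue = deque([start])
        blob = []
        while queue:  # breadth-first flood fill over occupied grid cells
            gx, gy = queue.popleft()
            blob.extend(cells[(gx, gy)])
            for dx in (-1, 0, 1):
                for dy in (-1, 0, 1):
                    nb = (gx + dx, gy + dy)
                    if nb in cells and nb not in seen:
                        seen.add(nb)
                        queue.append(nb)
        clusters.append(blob)
    return clusters


def next_pick_target(points, band=0.02, cell=0.02):
    """Return the (x, y, z) centroid of the highest top-layer blob, or None if empty."""
    if not points:
        return None
    blobs = cluster_xy(top_layer(points, band), cell)
    best = max(blobs, key=lambda b: mean(p[2] for p in b))
    return (mean(p[0] for p in best), mean(p[1] for p in best), mean(p[2] for p in best))


if __name__ == "__main__":
    def box_top(x0, y0, z):
        """Synthetic 6x6 grid of points on a box lid, 1 cm spacing."""
        return [(x0 + i * 0.01, y0 + j * 0.01, z) for i in range(6) for j in range(6)]

    cloud = box_top(0.0, 0.0, 0.40) + box_top(0.30, 0.0, 0.39) + box_top(0.60, 0.0, 0.20)
    print(next_pick_target(cloud))
```

In this synthetic example, the two boxes whose lids lie within 2 cm of the top form separate blobs, and the higher one is chosen; after each pick, the loop described above would capture a fresh point cloud and run the selection again.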

Joint engineering leads to success

Before the successful solution with SICK technology, several attempts had been made to implement a fully autonomous robotic station in the receiving area. However, these attempts, which typically relied on 2D data, did not achieve the desired results. The switch to SICK's 3D snapshot technology, together with close collaboration with Neadvance during engineering, resulted in a major technological breakthrough. An important goal of this collaboration was to make the processes as trouble-free and stable as possible. This becomes especially crucial for goods that are difficult to handle and require the robot to carry out complex gripping processes, so the project team focused its engineering efforts on refining these processes in particular. The 3D data and the resulting point clouds capture the scene in much greater detail, so adjustments could still be made during the project phase. This flexibility was also necessary because some influencing factors only became relevant during on-site commissioning.

More autonomy for the robot

The new solution works so reliably, precisely, and efficiently that the OEM is planning to implement it in its other plants as well. The general contractor and the system integrator commissioned for the project are also impressed with the 3D snapshot technology and the time-of-flight principle of the Visionary-T CX. They will integrate it as standard in their depalletizing processes.

